When you manage instances of SQL Server Analysis Services (SSAS) or Azure Analysis Services, you can modify the instance’s memory settings. The default settings are usually right for generic configurations; that said, you might want to consider alternative settings to optimize a heavier workload, dedicated machines, or to solve memory errors in particular conditions. The goal of the article is to provide practical tips to optimize the memory settings for specific scenarios. Unless otherwise specified, the settings apply to all the versions of Analysis Services (AS).

The article does not explain how Analysis Services uses memory. Chapter 14 of Tabular Modeling in SQL Server Analysis Services includes a complete explanation of the memory management in Analysis Services.

DISCLAIMER: Carefully read the documentation before making any changes to your memory settings. Before and after any change, always measure performance and memory metrics and revert to the original memory settings if the changes applied do not produce the expected results.

Accessing memory settings

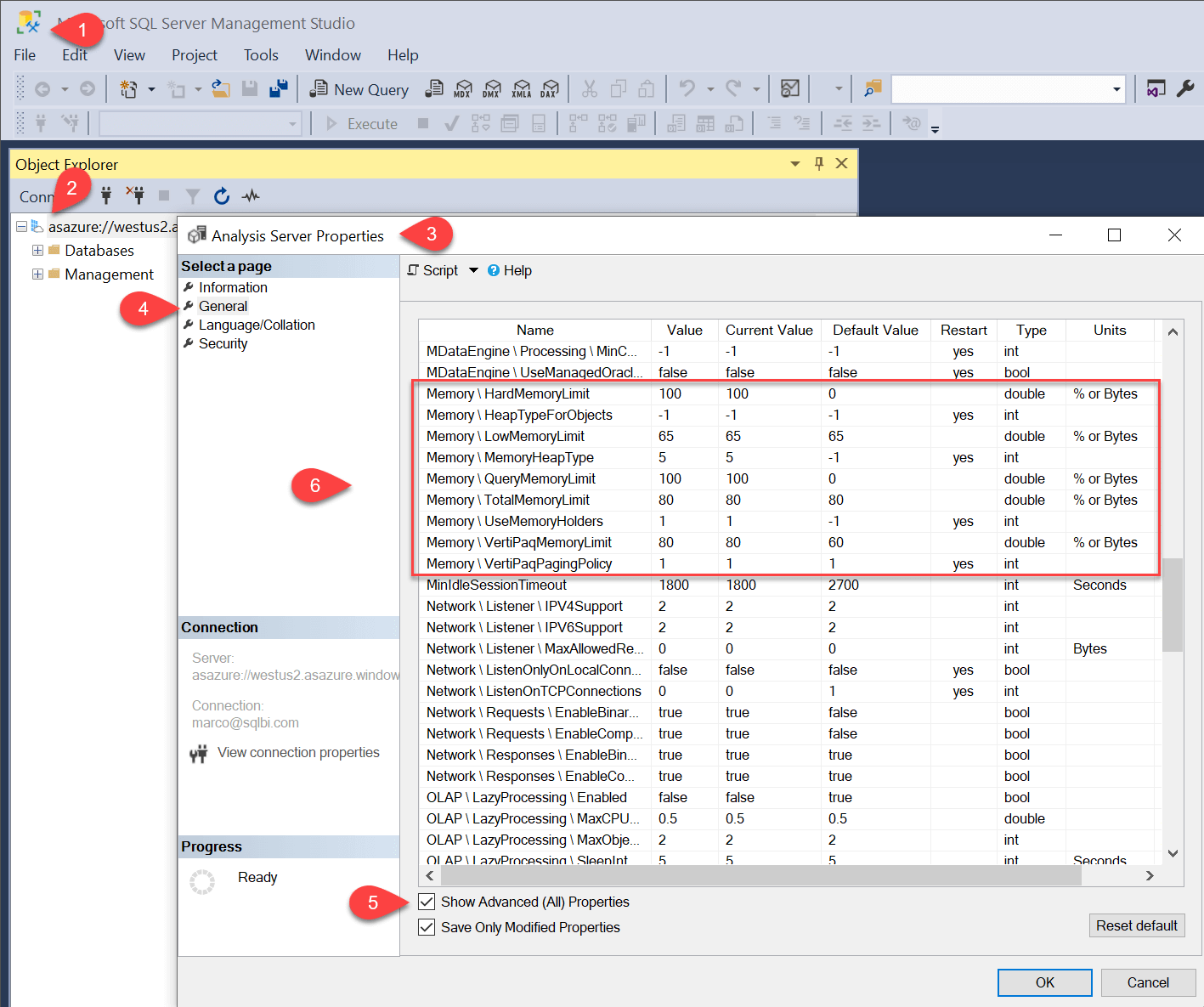

In order to access memory settings, you have to:

- Open SQL Server Management Studio (SSMS),

- Connect to the Analysis Services instance using an administrative account,

- Right-click the server name in Object Explorer and choose Properties from the context menu,

- Select the General page,

- Check the Show Advanced (All) Properties checkbox,

- Scroll to the settings in the Memory group.

HardMemoryLimit

This is the maximum memory that AS can allocate. If AS exceeds the hard memory limit, the system aggressively kills the active sessions to reduce memory use. Sessions killed for this reason receive an error that explains the cancellation due to memory pressure. With VertiPaqPagingPolicy at 0, it is also the limit for the maximum working set of the process. If HardMemoryLimit is set to 0, it uses a default value midway between the TotalMemoryLimit and the total physical memory – or the total virtual address space, if you are on a 32-bit machine on which the physical memory exceeds the virtual memory.

Tip: This value cannot be changed on Azure Analysis Services, where it uses all the physical memory available. The same setting (99 or 100) can be used on an SSAS instance exclusively dedicated to Analysis Services.

HeapTypeForObjects

Choose the heap system to allocate objects of a fixed size, such as instances of classes in C++. C++ is the language used by Microsoft to write Analysis Services. The possible values are as follows.

| Value | Description |

| -1 | The engine decides the setting to use. |

| 0 | Uses the Windows Low-Fragmentation Heap (LFH). |

| 1 | Uses the custom heap implementation of Analysis Services. |

| 3 | Each object has its own Analysis Services Heap. |

Tip: The suggestion is not to change this setting from the default value -1. Older SSAS versions (2012/2014) would require specific settings to avoid memory fragmentation issues. You should set the value to -1 if you have upgraded from older SSAS versions.

LowMemoryLimit

This is the point where the system starts to clear caches to free up memory. As memory use increases above the low memory limit, SSAS becomes more aggressive about evicting the cached data until it hits the high/total memory limit. At this point, it evicts everything that is not pinned.

AS does not release the allocated memory until this threshold is reached.

Tip: Decrease this value on servers where multiple instances of SSAS are running, or when other services compete with SSAS for memory, and you want to release memory from SSAS thus reducing the amount of paging produced by other services. The 65 default value is right for servers dedicated to AS. You might consider increasing this setting to 70 or 75 for dedicated servers with a large amount of RAM available (more than 100 GB).

MemoryHeapType

Choose the heap system to allocate objects of dynamic sizes, such as strings, vectors, bytes, and so on. The possible values are as follows.

| Value | Description |

| -1 | The engine decides the setting to use. |

| 1 | Uses the custom heap implementation of Analysis Services (AS). |

| 2 | Uses the Windows Low-Fragmentation Heap (LFH). |

| 5 | Hybrid allocator. This allocator uses Windows LFH for <= 16 KB allocations and the AS Heap for >16 KB allocations. |

| 6 | Intel Threading Building Blocks (TBB) allocator. |

Tip: The suggestion is not to change this setting from the default value -1. Only SSAS versions earlier than 2016 SP1 might require adjusting the memory settings to avoid memory leak and performance issues caused by the allocation of large memory blocks on Windows LFH.

QueryMemoryLimit

Control the memory used during a query. It is not available in SSAS versions prior to 2019. In SSAS 2019, this setting limits the materialization of intermediate DAX query results, and it does not apply to MDX queries.

In Azure Analysis Services, this setting is not limited only to memory spools and it applies to all the memory utilized by both DAX and MDX queries.

The value 0 does not activate this limitation.

Tip: Reduce this limit if many users experience errors when running queries because the memory is consumed by individual queries consuming a large amount of memory at query time. Long-running queries and excessive materialization can cause this. By applying a query limit, the long-running query consuming too much memory might fail earlier, reducing the risk of slowing down or stopping other queries that do not have high memory requests.

TotalMemoryLimit

If memory use exceeds the total memory limit, the memory manager evicts all the cached data that is not currently in use. TotalMemoryLimit must always be less than HardMemoryLimit.

Tip: This value cannot be changed on Azure Analysis Services, where it depends on the service plan. Decrease this value on servers where multiple instances of SSAS are running, or when other services compete with SSAS for memory, and you want to release memory from SSAS thus reducing paging produced by other services. The 80 default value is appropriate for servers dedicated to AS. You might consider increasing this setting to 90 or 95 for dedicated servers with a large amount of RAM available (more than 100 GB).

UseMemoryHolders

It is not documented yet. We assume it is for internal use only.

VertiPaqMemoryLimit

If you choose to set VertiPaqPagingPolicy to 0, VertiPaqMemoryLimit defines the total amount of memory VertiPaq can lock in the working set – that is, the total that can be used for in-memory databases. Remember that AS might use more memory for other reasons.

When VertiPaqPagingPolicy is set to 1, it defines a limit for the physical memory that is used by VertiPaq and pinned in memory. This allows paging for the remaining memory (virtual committed memory) to go above this limit.

Tip: If you want to reduce the memory for an instance of SSAS on a server where other services are also competing for the memory available, it makes sense to set VertiPaqMemoryLimit to a number lower than LowMemoryLimit.

VertiPaqPagingPolicy

This setting controls the paging of data managed by the VertiPaq storage engine.

| Value | Description |

| 0 | Disables paging. If memory is insufficient, processing fails with an out-of-memory error. |

| 1 | Enables paging to disk using the operating system page file (pagefile.sys). |

When you set it to 1, only hash dictionaries are locked in memory. Data pages can be flushed to disk. This enables VertiPaq to use more memory than is available. Keep in mind that if paging occurs, performance suffers a severe degradation.

The default is 0 (paging disabled) for Azure Analysis Services and 1 (paging enabled) for SSAS. Because the consequences of picking one or the other are severe, it is useful to recap the behavior of AS in the two modes.

If paging is off:

- The AS working set size is adjusted to use the VertipaqMemoryLimit – so physical memory is guaranteed to be available for the Vertipaq data.

- If AS has enough privileges, it may lock the Vertipaq memory into physical memory – if the memory is not available, the allocation might fail.

- With a default value of 20%, there is a limit of 5 SSAS instances that can run on the same server.

- When the process memory exceeds the LowMemoryLimit and approaches the TotalMemoryLimit, the cleaner starts cleaning caches and eventually the system will go into panic mode and start cancelling sessions.

If paging is on:

- The AS working set size is adjusted to grow up to VertipaqMemoryLimit.

- The cleaner process pretends like the Vertipaq allocations do not exist – it does not start cleaning before LowMemoryLimit +

- In these conditions, the AS process is heavily overcommitted.

- When there are additional memory requests, AS explicitly tells Windows to page out some of the Vertipaq memory, freeing up the working set of the process for other memory needs.

- When AS needs the paged out data, it is paged in automatically by Windows, which pages out some other data.

Tip: During processing, the VertiPaq memory required could be twice the processed objects’ memory. This can be a constraint, especially on Azure Analysis Services instances running with a service plan that does not have double the memory size of the largest database. By enabling VertiPaq paging, AS can complete the processing even though there is not enough memory to keep the existing objects and the new objects in memory. Fair use of paging guarantees the completion of data processing scheduled to run during non-peak hours, while keeping the existing data available to the user – albeit with slower performance. As an alternative, you can consider a backup of the existing database and a data clear before data processing. In that case, during the processing, the database is not available to the users.

Marco Russo

Marco Russo